Data is rarely perfect. In real-world datasets, missing values, inconsistencies, duplicates, and errors are common, which makes data cleaning a fundamental step in any data analysis or machine learning pipeline. Data cleaning ensures that the dataset is reliable, consistent, and ready for analysis, improving the accuracy and performance of models built on the data. (en.wikipedia.org)

Importance of Data Cleaning

A dataset full of errors or inconsistencies can lead to misleading insights. For example, missing entries in critical fields, duplicated records, or typographical errors can skew statistical measures and predictions. Cleaning data improves the quality, reliability, and interpretability of analyses. Moreover, well-preprocessed data ensures that downstream machine learning models train effectively without being affected by noisy or corrupt inputs.

Common Steps in Data Cleaning

1. Handling Missing Values: One of the most frequent issues is missing data. With libraries like Pandas, missing values can be detected and then either removed or imputed using strategies such as the mean, median, mode, or a predictive model. The right choice depends on why the data is missing; careful handling reduces the risk that important patterns are lost or misrepresented. (pandas.pydata.org)

2. Removing Duplicates: Duplicate rows can distort results. Identifying and removing duplicates ensures that each observation in the dataset is unique, preserving the integrity of analysis.

3. Correcting Data Types: Ensuring that each column has the appropriate data type is crucial. For instance, if a numeric column is stored as strings, arithmetic on it can fail or silently produce the wrong result (adding two strings concatenates them instead of summing them). Converting and standardizing data types facilitates accurate computations and visualizations.

4. Handling Outliers: Outliers are extreme values that can disproportionately affect model predictions. Detecting and treating outliers, either by removal or by transformation, can make models noticeably more robust.

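5. Standardization and Normalization: Standardization rescales a numerical feature to zero mean and unit variance, while normalization (min-max scaling) maps it to a fixed range such as 0 to 1. Putting features on a comparable scale improves performance in algorithms sensitive to feature magnitude, such as K-nearest neighbors, models trained with gradient descent, and neural networks.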
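The five steps above can be sketched with Pandas on a small table. This is a minimal illustration, not a recommended recipe: the dataset, the column names, the `clean` helper, the choice of median and mode imputation, and the 1.5 × IQR clipping rule are all assumptions made for the example.

```python
import numpy as np
import pandas as pd

# Hypothetical toy dataset; column names and values are illustrative only.
raw = pd.DataFrame({
    "age": ["25", "32", None, "32", "41", "29"],        # numbers stored as strings
    "income": [48000.0, 52000.0, 50000.0, 52000.0, 1_000_000.0, np.nan],
    "city": ["Paris", "Lyon", "Paris", "Lyon", None, "Nice"],
})


def clean(df):
    """Apply the five cleaning steps to a copy of df (hypothetical helper)."""
    df = df.copy()

    # Step 3: correct data types. Unparseable entries become NaN.
    df["age"] = pd.to_numeric(df["age"], errors="coerce")

    # Step 2: remove exact duplicate rows.
    df = df.drop_duplicates().reset_index(drop=True)

    # Step 1: impute missing values (median for numeric, mode for categorical).
    for col in ["age", "income"]:
        df[col] = df[col].fillna(df[col].median())
    df["city"] = df["city"].fillna(df["city"].mode().iloc[0])

    # Step 4: treat outliers by clipping income to the 1.5 * IQR fences.
    q1, q3 = df["income"].quantile([0.25, 0.75])
    iqr = q3 - q1
    df["income"] = df["income"].clip(q1 - 1.5 * iqr, q3 + 1.5 * iqr)

    # Step 5: standardize income (z-score) and min-max normalize age.
    df["income_z"] = (df["income"] - df["income"].mean()) / df["income"].std(ddof=0)
    age_range = df["age"].max() - df["age"].min()
    df["age_minmax"] = (df["age"] - df["age"].min()) / age_range
    return df


cleaned = clean(raw)
print(cleaned)
```

The order of the steps matters in practice. Here the types are fixed first so that duplicates and medians are computed on real numbers, and the medians are taken before the outlier is clipped, which is one reason the median is often preferred to the mean for imputation.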

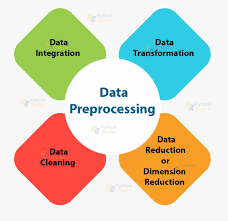

Data Preprocessing Techniques

Data preprocessing is closely related to cleaning and involves transforming raw data into a suitable format for modeling. Common techniques include:

• Encoding categorical variables into numerical forms, such as one-hot encoding.

• Feature engineering to create new, informative attributes.

• Text preprocessing for natural language data, including tokenization, stemming, and stop-word removal.

• Dimensionality reduction methods like PCA to reduce noise and simplify datasets. (Analytics Vidhya)
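Three of the techniques listed above can be sketched in a few lines. The sample data, the tiny stop-word set, and the helper names `tokenize` and `pca_project` are assumptions for illustration. Stemming is left out because it usually relies on a dedicated NLP library, and PCA is written directly with NumPy's SVD here rather than with a library implementation.

```python
import re

import numpy as np
import pandas as pd

# One-hot encoding: each category becomes its own 0/1 column.
colors = pd.DataFrame({"color": ["red", "green", "red", "blue"]})
encoded = pd.get_dummies(colors, columns=["color"], dtype=int)

# Text preprocessing: lowercase, tokenize, and drop stop words.
STOP_WORDS = {"the", "is", "a", "of", "and"}  # tiny illustrative list


def tokenize(text):
    """Split text into lowercase alphanumeric tokens, removing stop words."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return [t for t in tokens if t not in STOP_WORDS]


# Dimensionality reduction: project centered data onto the top principal axes.
def pca_project(X, n_components):
    """Return X projected onto its first n_components principal components."""
    X_centered = X - X.mean(axis=0)
    # Rows of Vt are the principal axes, ordered by decreasing singular value.
    _, _, Vt = np.linalg.svd(X_centered, full_matrices=False)
    return X_centered @ Vt[:n_components].T


rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
X_reduced = pca_project(X, n_components=2)

print(encoded)
print(tokenize("The quality of the data is key"))
print(X_reduced.shape)
```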

Tools for Data Cleaning

Python provides robust tools for cleaning and preprocessing data. Pandas is the go-to library for manipulating tabular data, NumPy supports numerical operations, and Scikit-learn offers preprocessing modules for scaling, encoding, and imputing missing values. Combined with visualization libraries like Matplotlib and Seaborn, data scientists can both identify issues and verify cleaning processes effectively.
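As a rough sketch of how these libraries fit together, the example below chains Scikit-learn's imputation, scaling, and encoding transformers over a small Pandas table. The data and column names are made up, and the choice of median and most-frequent imputation is an assumption, not a recommendation.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical table with missing values in numeric and categorical columns.
data = pd.DataFrame({
    "age": [25.0, 32.0, np.nan, 41.0],
    "income": [48000.0, 52000.0, 50000.0, np.nan],
    "city": ["Paris", "Lyon", np.nan, "Paris"],
})

# Numeric columns: fill gaps with the median, then standardize.
numeric = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
])

# Categorical columns: fill gaps with the most frequent value, then one-hot encode.
categorical = Pipeline([
    ("impute", SimpleImputer(strategy="most_frequent")),
    ("encode", OneHotEncoder(handle_unknown="ignore")),
])

# sparse_threshold=0 asks for a dense NumPy array as the combined output.
preprocess = ColumnTransformer(
    [
        ("num", numeric, ["age", "income"]),
        ("cat", categorical, ["city"]),
    ],
    sparse_threshold=0,
)

features = preprocess.fit_transform(data)
print(features.shape)
```

Wrapping the steps in a single transformer means the statistics learned during `fit` (medians, means, categories) can be reapplied unchanged to new data with `transform`, which helps avoid leaking information from a test set into training.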

Conclusion

Data cleaning and preprocessing are critical foundations of any data-driven project. Without clean, consistent, and well-structured data, analytical results can be misleading and unreliable. By systematically addressing missing values, duplicates, inconsistencies, outliers, and scaling issues, data scientists give their models a sounder basis for accurate and trustworthy results. Mastering these steps allows professionals to transform raw datasets into actionable insights, driving informed decisions across industries.

References

1. Data Cleansing, Wikipedia

2. Handling Missing Data, Pandas Documentation

3. Data Preprocessing Techniques for Machine Learning, Analytics Vidhya